Yuan3.0 Flash foundation model is now open source

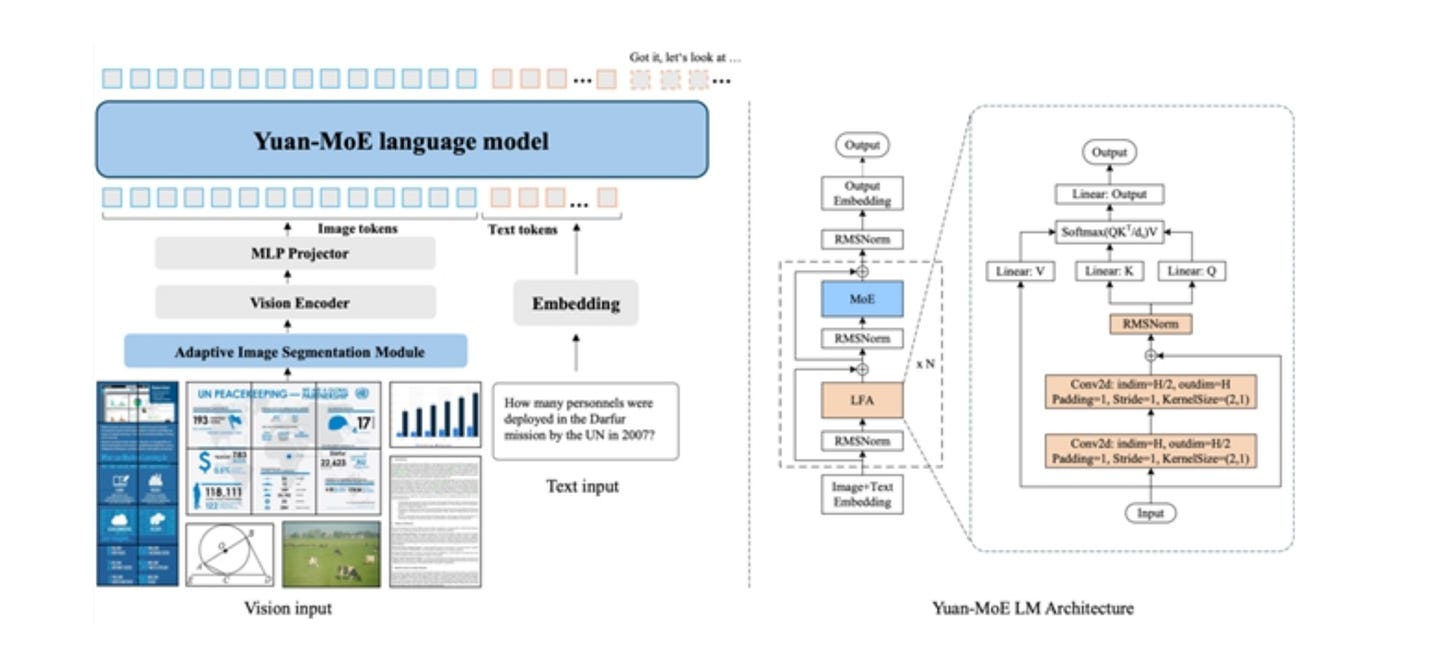

On December 31st, the YuanLab.ai team officially released and open-sourced Yuan3.0 Flash, a multimodal foundation model. Yuan3.0 Flash has 40B parameters and uses a sparse Mixture-of-Experts (MoE) architecture, so only about 3.7B parameters are activated in a single inference. The model introduces a new reinforcement learning training method, RAPO, together with a Reflection Inhibition Reward Mechanism (RIRM) that steers the model away from unproductive reflection during training. As a result, Yuan3.0 Flash improves reasoning accuracy while significantly reducing the number of tokens consumed during inference, which in turn lowers compute cost.
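To put the sparse activation in perspective, the short sketch below works out what share of the model's parameters is used per inference. It relies only on the two approximate figures quoted above (40B total, about 3.7B active). The helper name `active_fraction` is a hypothetical name chosen for illustration and is not part of any Yuan3.0 Flash code.

```python
# Approximate figures from the announcement, in billions of parameters.
TOTAL_PARAMS_B = 40.0   # total model size
ACTIVE_PARAMS_B = 3.7   # parameters activated in a single inference


def active_fraction(active_b: float, total_b: float) -> float:
    """Return the share of parameters used per inference (hypothetical helper)."""
    if total_b <= 0:
        raise ValueError("total_b must be positive")
    return active_b / total_b


fraction = active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B)
print(f"Active share per inference: {fraction:.2%}")
```

Under these figures, roughly 9% of the parameters take part in each inference. That ratio is the reason a sparse MoE model can have a per-token compute cost closer to that of a much smaller dense model.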